Can you use ECS without DevOps?

Use your imagination and assume that a team was told to migrate away from Azure App Service. The decision was made at a high level, so a small dev team now has to move to AWS. What's the issue, you may ask? They have no experience with Amazon Web Services, and they don't have a "DevOps" person. The app is a JAR file. App Service allowed them to drop the file into the service and run it in production without any issues. When they started looking for an alternative on the AWS side, the only solution they found was Elastic Beanstalk; unfortunately, the workload fits better on a "Docker platform". ECS, on the other hand, doesn't provide "drop-in" functionality. And that's the case. Let's check how easily we can build the bridge!

Tools used in this episode

- Java

- ECS

- CloudFormation (again!)

- GitHub Actions

Rules

- As a starting point, let's assume that we can use a random Java app, as it's not today's topic. Maybe you know it, maybe not: there is a great site with HelloWorld apps.

- We can't write Dockerfiles (our devs have no idea about it!)

- CloudFormation scripts: we must fit into the 3-tier architecture model.

- CI/CD should be very simple to set up and use, as the developers have no time for it.

Containers

As an example, I decided to use openliberty-realworld-example-app written by OpenLiberty. The app is a standard Maven project, hasn't been updated for the last 2 years, and exposes a REST API. There is also no Dockerfile, which means I will need to write one for comparison purposes.

Next, I started looking for a "container generator": an app or service that can easily build a secure container for us. I found a solution called Buildpacks. It can probably generate a Docker image with one, maybe two commands. First, we need to install pack, a CLI tool. Documentation can be found here. The first thing I did, besides running pack --version, was to check the available builders. What is a builder?

It's a container image that contains all the dependencies needed to build the final image. The funny thing is that we can even write our own builder; in the end, it's a solution similar to Red Hat's s2i. Which builders are available out of the box? At least a few.

    pack builder suggest
    Suggested builders:
        Google:               gcr.io/buildpacks/builder:v1     Ubuntu 18 base image with buildpacks for .NET, Go, Java, Node.js, and Python
        Heroku:               heroku/builder:22                Base builder for Heroku-22 stack, based on ubuntu:22.04 base image
        Heroku:               heroku/buildpacks:20             Base builder for Heroku-20 stack, based on ubuntu:20.04 base image
        Paketo Buildpacks:    paketobuildpacks/builder:base    Ubuntu bionic base image with buildpacks for Java, .NET Core, NodeJS, Go, Python, Ruby, Apache HTTPD, NGINX and Procfile
        Paketo Buildpacks:    paketobuildpacks/builder:full    Ubuntu bionic base image with buildpacks for Java, .NET Core, NodeJS, Go, Python, PHP, Ruby, Apache HTTPD, NGINX and Procfile
        Paketo Buildpacks:    paketobuildpacks/builder:tiny    Tiny base image (bionic build image, distroless-like run image) with buildpacks for Java, Java Native Image and Go

Looks like we can use paketobuildpacks/builder:tiny as we have a Java app. That is why it's handy to use popular programming languages. Probably it will be supported everywhere.

Now that we have chosen the builder, let's run the build command.

    pack build openliberty-realworld-example-app:pack --builder paketobuildpacks/builder:tiny

First note: it just doesn't work on an M1 MacBook. I mean, maybe it works, but it just gets stuck. So I switched to my x86 workstation. In the beginning, it looked good: the app was built in 2 minutes and the image size was acceptable at ~241MB. I was also able to run the container. In one word: success. Pack was able to detect the type of the build artifact and use the correct middleware for it. It's impressive!

Next, I created a "regular" Dockerfile and compared the size. The funny thing is that I needed to spend around one hour writing a Dockerfile for an unknown application.

    # Build stage: compile and package the app with Maven
    FROM maven:3.6.0-jdk-11-slim AS build
    COPY src /home/app/src
    COPY pom.xml /home/app
    RUN mvn -f /home/app/pom.xml clean package

    # Runtime stage: deploy the WAR to Tomcat
    FROM tomcat:9.0.73-jre11

    COPY --from=build /home/app/target/realworld-liberty.war /usr/local/tomcat/webapps/
    EXPOSE 8080

Below you can see the size of the container images. The version tagged pack is 80MB smaller, which is roughly 27% less. That is impressive.

| Image | Size |
|---|---|
| openliberty-realworld-example-app:manual | 291MB |
| openliberty-realworld-example-app:pack | 211MB |
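A quick check of the numbers, as a minimal sketch. The two sizes are copied from the table; the variable names are only illustrative. On your own machine the sizes can be listed with `docker images openliberty-realworld-example-app`.

```shell
#!/bin/sh
# Image sizes in MB, copied from the size table.
manual_mb=291
pack_mb=211

# Absolute and relative saving. Shell arithmetic is integer-only,
# so the percentage is truncated rather than rounded.
saved_mb=$((manual_mb - pack_mb))
saved_pct=$((saved_mb * 100 / manual_mb))

echo "pack image is ${saved_mb}MB smaller (${saved_pct}% less)"
```

The percentage is relative to the manually built image, so it answers the question "how much do I save by switching to pack?".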

The next step was running security scans with trivy.

| Image | Low findings | Medium findings |
|---|---|---|
| openliberty-realworld-example-app:manual | 15 | 4 |
| openliberty-realworld-example-app:pack | 3 | 0 |
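The exact trivy invocation isn't shown here, so the following is only a sketch of how both images could be scanned. It assumes the `trivy image` subcommand with `--severity` limited to the two levels from the table. The `run` helper and the `DRY_RUN` switch are hypothetical additions for illustration: by default the script only prints the commands.

```shell
#!/bin/sh
# Scan both image variants with trivy, limited to LOW and MEDIUM findings.
APP="openliberty-realworld-example-app"

# DRY_RUN=1 (the default in this sketch) only prints each command;
# set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

scan_all() {
  for tag in manual pack; do
    run trivy image --severity LOW,MEDIUM "${APP}:${tag}"
  done
}

scan_all
```

Running it with `DRY_RUN=0` requires trivy to be installed and both images to be present locally.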

It seems that the image built with pack is both more secure and smaller than the image built by me. So yes, I'm personally impressed. In my opinion, though, a more complex app could produce a lot of new issues that need many hours of digging and tweaking. Still, if you're using Java, Buildpacks should be able to handle your code without serious issues. At least based on my short tests.

Platform

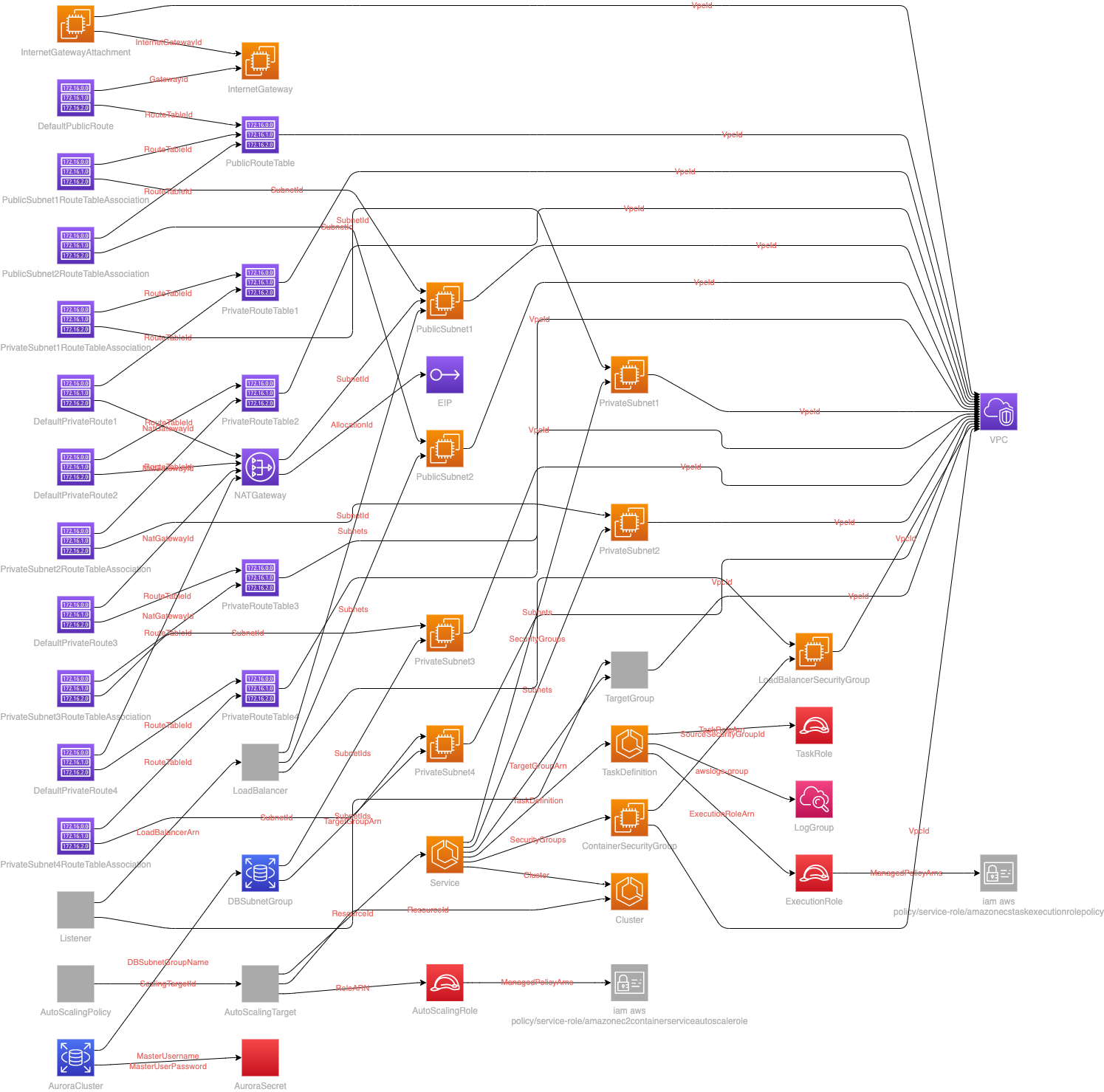

Now let me introduce ECS, the Elastic Container Service. It's Amazon's way of managing containers in the cloud. It's not Kubernetes or docker-compose. The best thing is that we can use Fargate (managed, pay-as-you-go compute) as the muscles of our solution. Thanks to that, our infrastructure can be very cost-efficient. In our case, I will try to fit the architecture into a corporate 3-tier model. The whole file, about 500 lines long, can be found here. The diagram was created with cfn-diagram. And it's awful.

Figure: Great image, for showing on entry-level demos
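For a basic setup without the full template, a cluster and a Fargate service could also be created from the AWS CLI. This is only a sketch and not the CloudFormation template from this post. The cluster, service, task definition, subnet, and security group values are hypothetical placeholders, and a task definition is assumed to be registered already. The `run` helper and `DRY_RUN` switch are illustrative too: by default the commands are only printed.

```shell
#!/bin/sh
# Hypothetical names and IDs; replace them with values from your own account.
CLUSTER="demo-cluster"
SERVICE="realworld-service"
TASK_DEF="realworld-task"                  # family of an already registered task definition
SUBNETS="subnet-11111111,subnet-22222222"  # private subnets of the application tier
SEC_GROUP="sg-33333333"

# DRY_RUN=1 (the default in this sketch) only prints each command;
# set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

provision() {
  run aws ecs create-cluster --cluster-name "$CLUSTER"

  # Fargate tasks use awsvpc networking, so subnets and a security group are required.
  run aws ecs create-service \
    --cluster "$CLUSTER" \
    --service-name "$SERVICE" \
    --task-definition "$TASK_DEF" \
    --desired-count 1 \
    --launch-type FARGATE \
    --network-configuration "awsvpcConfiguration={subnets=[$SUBNETS],securityGroups=[$SEC_GROUP],assignPublicIp=DISABLED}"
}

provision
```

With `assignPublicIp=DISABLED` the tasks need another route to pull the image (for example a NAT gateway), which is what you would expect for the middle tier of a 3-tier layout.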

Deployment

If we wanted to deploy our application easily, the simplest way would be to use CodeDeploy. However, in many organizations the CI/CD tool is an external solution, chosen to avoid vendor lock-in. Let's focus then on an easy and generic way to update our code on QA and PROD, with some tests as well. For example, 5 years ago I did an interview task, and back then there was a tool called silinternational/ecs-deploy. Let's see if we can skip it, as the plan is to use GitHub Actions today!

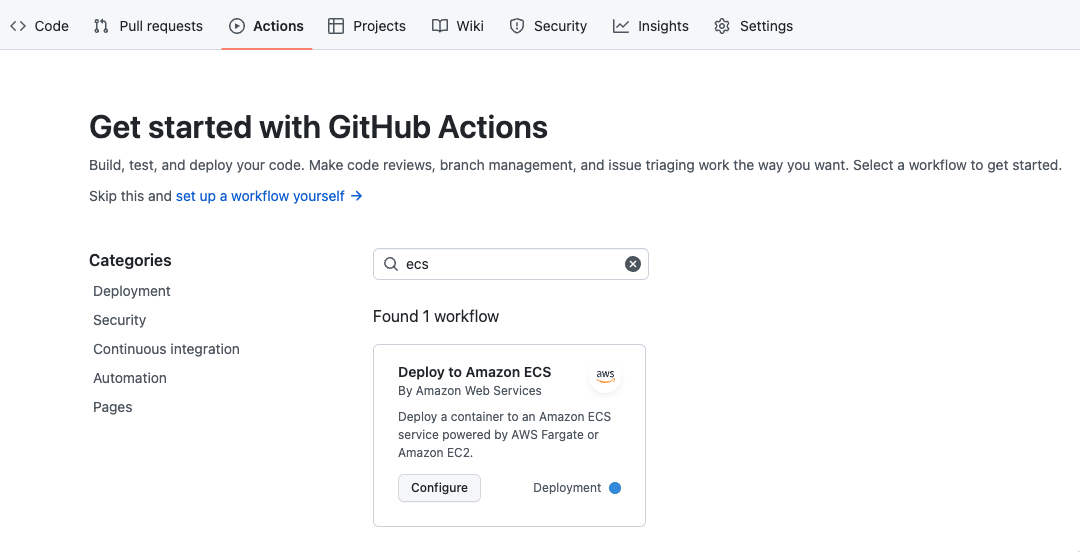

First impression

It's a really nice tool. I started with the panel and the built-in, or rather pre-created, actions.

Figure: Simple deployment example

After that, I was a bit shocked. I received an almost complete pipeline. Yes, it's simple, but it's ready to use and a good starting point:

    # This workflow will build and push a new container image to Amazon ECR,
    # and then will deploy a new task definition to Amazon ECS, when there is a push to the "main" branch.
    #
    # To use this workflow, you will need to complete the following set-up steps:
    #
    # 1. Create an ECR repository to store your images.
    #    For example: `aws ecr create-repository --repository-name my-ecr-repo --region us-east-2`.
    #    Replace the value of the `ECR_REPOSITORY` environment variable in the workflow below with your repository's name.
    #    Replace the value of the `AWS_REGION` environment variable in the workflow below with your repository's region.
    #
    # 2. Create an ECS task definition, an ECS cluster, and an ECS service.
    #    For example, follow the Getting Started guide on the ECS console:
    #      https://us-east-2.console.aws.amazon.com/ecs/home?region=us-east-2#/firstRun
    #    Replace the value of the `ECS_SERVICE` environment variable in the workflow below with the name you set for the Amazon ECS service.
    #    Replace the value of the `ECS_CLUSTER` environment variable in the workflow below with the name you set for the cluster.
    #
    # 3. Store your ECS task definition as a JSON file in your repository.
    #    The format should follow the output of `aws ecs register-task-definition --generate-cli-skeleton`.
    #    Replace the value of the `ECS_TASK_DEFINITION` environment variable in the workflow below with the path to the JSON file.
    #    Replace the value of the `CONTAINER_NAME` environment variable in the workflow below with the name of the container
    #    in the `containerDefinitions` section of the task definition.
    #
    # 4. Store an IAM user access key in GitHub Actions secrets named `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY`.
    #    See the documentation for each action used below for the recommended IAM policies for this IAM user,
    #    and best practices on handling the access key credentials.

    name: Deploy to Amazon ECS

    on:
      push:
        branches: [ "main" ]

    env:
      AWS_REGION: MY_AWS_REGION                   # set this to your preferred AWS region, e.g. us-west-1
      ECR_REPOSITORY: MY_ECR_REPOSITORY           # set this to your Amazon ECR repository name
      ECS_SERVICE: MY_ECS_SERVICE                 # set this to your Amazon ECS service name
      ECS_CLUSTER: MY_ECS_CLUSTER                 # set this to your Amazon ECS cluster name
      ECS_TASK_DEFINITION: MY_ECS_TASK_DEFINITION # set this to the path to your Amazon ECS task definition
                                                  # file, e.g. .aws/task-definition.json
      CONTAINER_NAME: MY_CONTAINER_NAME           # set this to the name of the container in the
                                                  # containerDefinitions section of your task definition

    permissions:
      contents: read

    jobs:
      deploy:
        name: Deploy
        runs-on: ubuntu-latest
        environment: production

        steps:
        - name: Checkout
          uses: actions/checkout@v3

        - name: Configure AWS credentials
          uses: aws-actions/configure-aws-credentials@v1
          with:
            aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
            aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
            aws-region: ${{ env.AWS_REGION }}

        - name: Login to Amazon ECR
          id: login-ecr
          uses: aws-actions/amazon-ecr-login@v1

        - name: Build, tag, and push image to Amazon ECR
          id: build-image
          env:
            ECR_REGISTRY: ${{ steps.login-ecr.outputs.registry }}
            IMAGE_TAG: ${{ github.sha }}
          run: |
            # Build a docker container and
            # push it to ECR so that it can
            # be deployed to ECS.
            docker build -t $ECR_REGISTRY/$ECR_REPOSITORY:$IMAGE_TAG .
            docker push $ECR_REGISTRY/$ECR_REPOSITORY:$IMAGE_TAG
            echo "image=$ECR_REGISTRY/$ECR_REPOSITORY:$IMAGE_TAG" >> $GITHUB_OUTPUT

        - name: Fill in the new image ID in the Amazon ECS task definition
          id: task-def
          uses: aws-actions/amazon-ecs-render-task-definition@v1
          with:
            task-definition: ${{ env.ECS_TASK_DEFINITION }}
            container-name: ${{ env.CONTAINER_NAME }}
            image: ${{ steps.build-image.outputs.image }}

        - name: Deploy Amazon ECS task definition
          uses: aws-actions/amazon-ecs-deploy-task-definition@v1
          with:
            task-definition: ${{ steps.task-def.outputs.task-definition }}
            service: ${{ env.ECS_SERVICE }}
            cluster: ${{ env.ECS_CLUSTER }}
            wait-for-service-stability: true

In general, it's an unexpected gift, especially if you're not that familiar with all this piping stuff. What do we need to change? Only the build part, where we should use:

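        # Assumption: the pack CLI is installed by the setup-pack action from the
        # buildpacks project; check its releases for the current version tag.
        - name: Set up pack
          uses: buildpacks/github-actions/setup-pack@v5.0.0

        - name: Build, tag, and push image to Amazon ECR
          id: build-image
          env:
            ECR_REGISTRY: ${{ steps.login-ecr.outputs.registry }}
            IMAGE_TAG: ${{ github.sha }}
          run: |
            # --publish pushes the image straight to the registry named in the
            # image reference, using the credentials from the ECR login step.
            pack build "$ECR_REGISTRY/$ECR_REPOSITORY:$IMAGE_TAG" \
              --builder paketobuildpacks/builder:tiny \
              --publish
            echo "image=$ECR_REGISTRY/$ECR_REPOSITORY:$IMAGE_TAG" >> $GITHUB_OUTPUT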

In the end, we can copy the whole job as dev and paste it before the production job. It wouldn't be the cleanest pipeline I have ever seen, but c'mon. The solution (without CloudFormation) was created in 30 minutes, in line with the rule that CI/CD must be simple to set up.
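Before wiring the buildpack build into the pipeline, it can be tried from a workstation. The sketch below assumes that `pack build` is run with its `--publish` flag so the image goes straight to ECR. The account ID in the registry host and the tag are hypothetical, the repository name and region are borrowed from the example in the workflow comments, and the `run` helper with its `DRY_RUN` switch is an illustrative addition that prints the command by default.

```shell
#!/bin/sh
# Hypothetical values; the account ID in the registry host is a placeholder.
AWS_REGION="us-east-2"
ECR_REGISTRY="123456789012.dkr.ecr.${AWS_REGION}.amazonaws.com"
ECR_REPOSITORY="my-ecr-repo"
IMAGE_TAG="local-test"

# Same registry/repository:tag format the workflow writes to $GITHUB_OUTPUT.
image_ref() {
  printf '%s/%s:%s\n' "$ECR_REGISTRY" "$ECR_REPOSITORY" "$IMAGE_TAG"
}

# DRY_RUN=1 (the default in this sketch) only prints each command;
# set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

publish() {
  # A real run needs a registry login first, so pack can push with Docker credentials.
  if [ "${DRY_RUN:-1}" != "1" ]; then
    aws ecr get-login-password --region "$AWS_REGION" |
      docker login --username AWS --password-stdin "$ECR_REGISTRY"
  fi
  run pack build "$(image_ref)" --builder paketobuildpacks/builder:tiny --publish
}

publish
```

Keeping the image reference in one function means the local test and the pipeline push to exactly the same name, which makes it easier to compare the results.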

Summary

Uff, to be honest I spent most of the time building the CloudFormation template. During that time, I was also preparing a presentation for the AWS User Group. So in the end I became more tired than I expected. What is the overall result? Quite impressive. I'm very happy with Buildpacks, which work well. The problem is that automagic solutions are hard to debug. If you run into issues with packing, writing a Dockerfile will probably be much faster.

What can I recommend, then? Probably learning Docker. Even if it requires some time, it's a standard these days, and it provides almost ultimate flexibility.

What about ECS? It's easy to set up (don't look at the template, it's overcomplicated). If you decide to go with the console or a basic config, it will be a 5-minute task, especially if your application is rather simple, with one or two services. Managing large, complex, multi-microservice setups will probably be very annoying. Ah, I almost forgot. If you like open-source stuff and cutting-edge tech from KubeCon, don't expect that here. Besides that? If you have a small app or two, it's probably a much better solution than EKS or raw Kubernetes!